Training produces Out Of Memory error with TF 2.* but works with TF 1.14 · Issue #39574 · tensorflow/tensorflow · GitHub

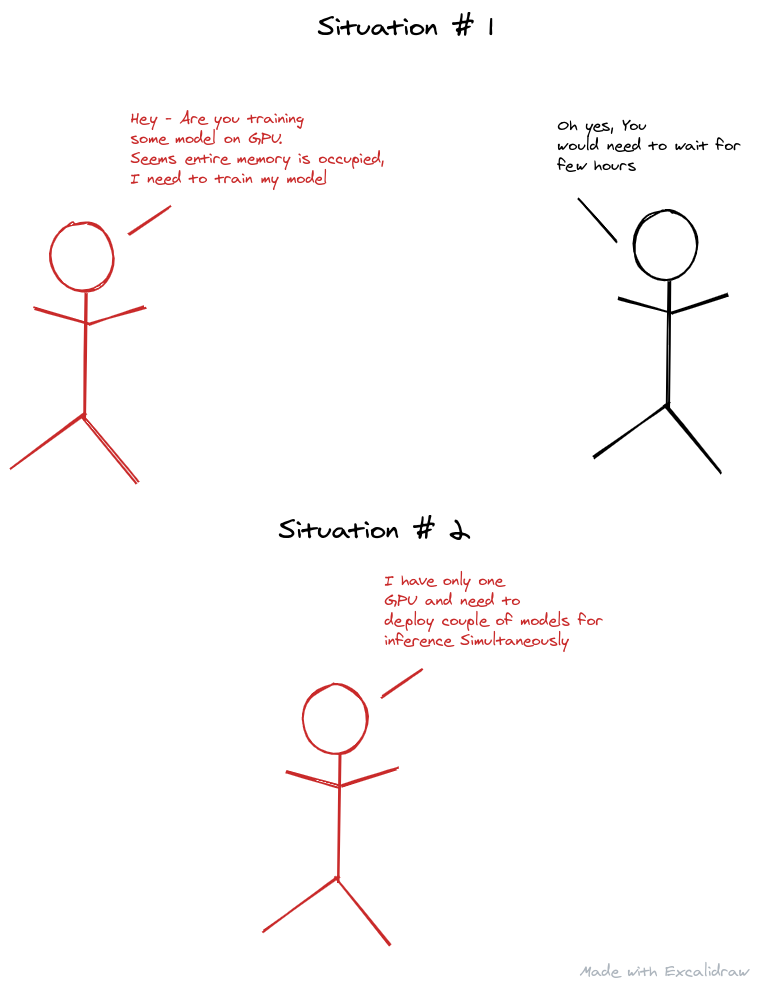

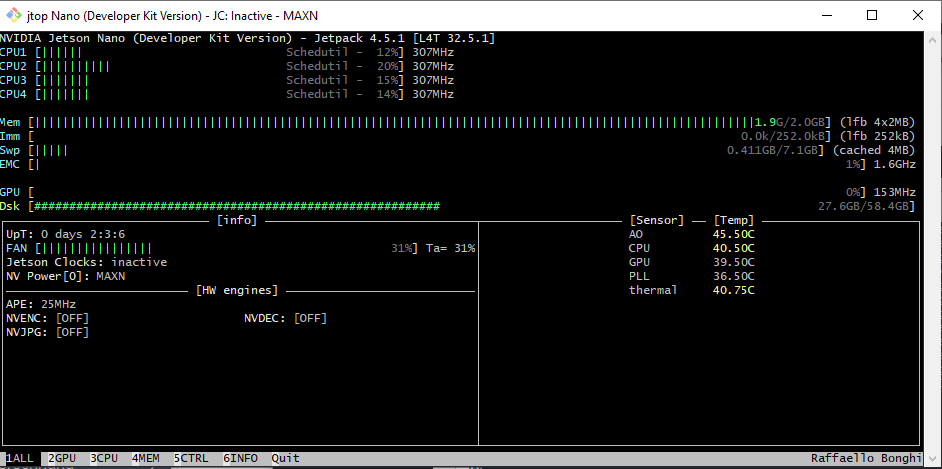

Memory Hygiene With TensorFlow During Model Training and Deployment for Inference | by Tanveer Khan | IBM Data Science in Practice | Medium

Allocator (GPU_0_bfc) ran out of memory trying to allocate 17.49MiB with freed_by_count=0. – jentsch.io

Allocator (GPU_0_bfc) ran out of memory trying to allocate 2.39GiB with freed_by_count=0. · Issue #1303 · tensorpack/tensorpack · GitHub

Problem In tensorflow-gpu with error "Allocator (GPU_0_bfc) ran out of memory trying to allocate 2.20GiB with freed_by_count=0." · Issue #43546 · tensorflow/tensorflow · GitHub

CUDA_ERROR_OUT_OF_MEMORY: out of memory with RTX 2070 · Issue #25337 · tensorflow/tensorflow · GitHub

Allocator (GPU_0_bfc) ran out of memory · Issue #12 · aws-deepracer-community/deepracer-for-cloud · GitHub